Overview

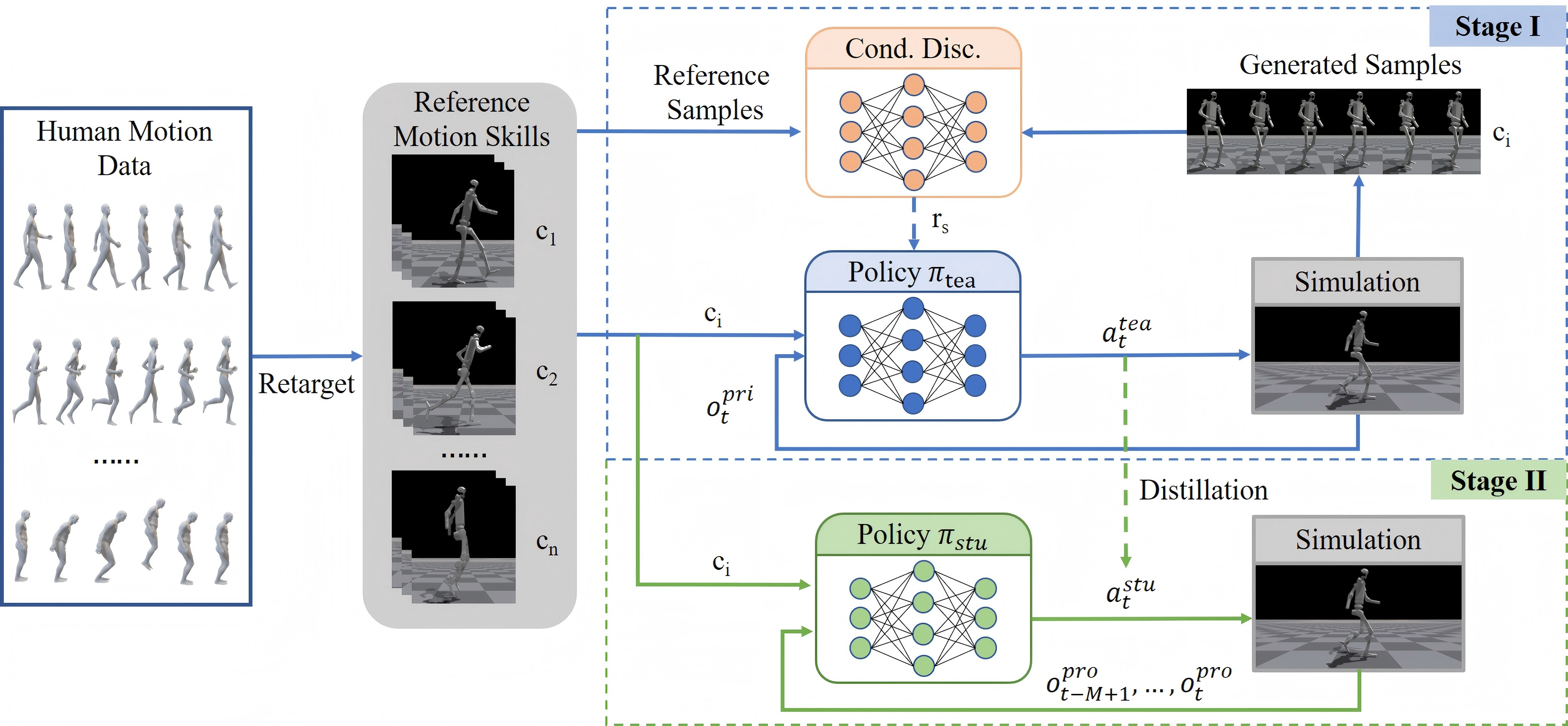

This paper presents HAML, a robust two-stage learning framework that turns large-scale, weakly labeled motion datasets into deployable multi-skill humanoid controllers. It targets two challenges: mode collapse in conditional motion generation, and the engineering gap between simulation and reality. To keep annotation effort low while preserving controllability, the system relies only on coarse clip-level skill labels.

The core methodological contribution is a condition-aware adversarial training mechanism. It injects mismatched transition-label pairs into discriminator training and explicitly penalizes these incorrect associations, so that the discriminator enforces strict compliance with the commanded skill.

For physical deployment, a privileged teacher policy is distilled into a student policy that observes only history-stacked proprioceptive inputs, which removes the dependence on unreliable external state estimation. Extensive evaluations in simulation and on a physical Unitree G1 robot show that HAML achieves better skill coverage and transition stability than state-of-the-art baselines, while running real-time, high-frequency control within onboard hardware constraints.
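The page does not state HAML's discriminator objective. As a minimal sketch of the mismatched-pair idea, assuming a standard binary cross-entropy discriminator conditioned on an integer skill label, the code below scores three groups: expert transitions with their true label (real), policy transitions (fake), and expert transitions paired with a deliberately wrong label (fake). The function names, the `Discriminator` signature and the loss form are hypothetical; the actual method may use a different objective or additional regularizers.

```python
import math
import random
from typing import Callable, List, Sequence, Tuple

# A transition is (state, next_state); states are plain feature sequences here.
Transition = Tuple[Sequence[float], Sequence[float]]
# Hypothetical discriminator signature: (transition, skill_label) -> logit.
Discriminator = Callable[[Transition, int], float]


def mismatch_labels(labels: List[int], num_skills: int, rng: random.Random) -> List[int]:
    """Return, for each label, a different label drawn uniformly from the other skills."""
    if num_skills < 2:
        raise ValueError("need at least two skills to build a mismatched label")
    # A nonzero offset modulo num_skills can never map a label back to itself.
    return [(c + rng.randrange(1, num_skills)) % num_skills for c in labels]


def bce_with_logits(logit: float, target: float) -> float:
    """Numerically stable binary cross-entropy for a single logit."""
    return max(logit, 0.0) - logit * target + math.log1p(math.exp(-abs(logit)))


def condition_aware_disc_loss(
    disc: Discriminator,
    expert: List[Transition],
    expert_labels: List[int],
    policy: List[Transition],
    policy_labels: List[int],
    num_skills: int,
    rng: random.Random,
) -> float:
    """Average BCE over three groups of (transition, label) pairs:
    matched expert pairs                -> target 1 (real)
    policy pairs                        -> target 0 (fake)
    expert pairs with a wrong label     -> target 0 (fake)
    """
    terms = [bce_with_logits(disc(t, c), 1.0) for t, c in zip(expert, expert_labels)]
    terms += [bce_with_logits(disc(t, c), 0.0) for t, c in zip(policy, policy_labels)]
    wrong = mismatch_labels(expert_labels, num_skills, rng)
    # Penalizing these pairs is what forces the discriminator to check the label.
    terms += [bce_with_logits(disc(t, c), 0.0) for t, c in zip(expert, wrong)]
    return sum(terms) / len(terms)
```

Without the third group, a discriminator that ignores the label entirely can still separate expert from policy motion; with it, a label-blind discriminator pays a large loss on every mismatched expert pair, so the label becomes informative.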

Visualization of Heading Control

HAML supports finer-grained control of each motion skill by conditioning the policy on additional task commands, such as a heading command, during training.
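The page does not specify the observation or command format. As a hedged illustration of how a task command such as heading could sit alongside the skill label and the history-stacked proprioception mentioned in the overview, the sketch below assembles one flat policy input. The class name `StudentObservationBuilder`, the one-hot skill encoding, the (cos, sin) heading encoding and the window length are all assumptions, not HAML's documented layout.

```python
import math
from collections import deque
from typing import Deque, List, Sequence


class StudentObservationBuilder:
    """Hypothetical builder for a proprioception-only policy observation.

    Assumed layout:
      [proprio_{t-H+1}, ..., proprio_t, one_hot(skill), cos(heading), sin(heading)]
    """

    def __init__(self, history_len: int, num_skills: int) -> None:
        if history_len < 1 or num_skills < 1:
            raise ValueError("history_len and num_skills must be positive")
        self.history_len = history_len
        self.num_skills = num_skills
        self._history: Deque[List[float]] = deque(maxlen=history_len)

    def reset(self, first_frame: Sequence[float]) -> None:
        # Fill the whole window with the first frame so the input size is fixed from step 0.
        self._history.clear()
        for _ in range(self.history_len):
            self._history.append(list(first_frame))

    def build(self, frame: Sequence[float], skill: int, heading: float) -> List[float]:
        if not 0 <= skill < self.num_skills:
            raise ValueError("skill index out of range")
        if not self._history:
            self.reset(frame)
        else:
            # deque(maxlen=H) discards the oldest frame automatically.
            self._history.append(list(frame))
        obs: List[float] = [x for f in self._history for x in f]  # oldest to newest
        one_hot = [0.0] * self.num_skills
        one_hot[skill] = 1.0
        obs += one_hot
        # Encode the heading command as (cos, sin) to avoid the wrap-around at +/- pi.
        obs += [math.cos(heading), math.sin(heading)]
        return obs
```

Because the command occupies its own slots in the input, the same stacked proprioceptive history can be reused unchanged when new task commands are added; only the tail of the vector grows.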

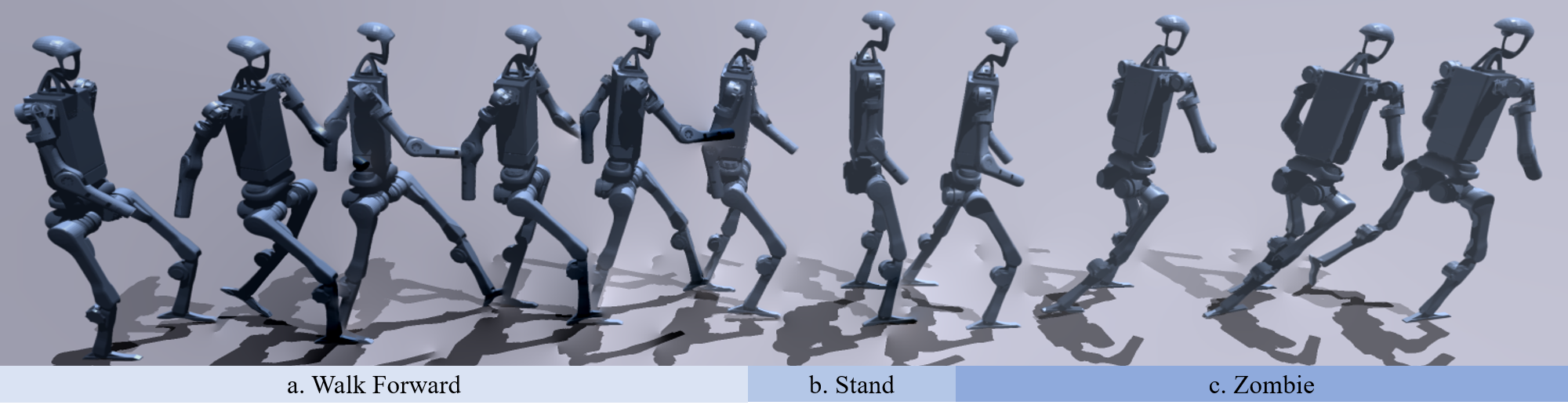

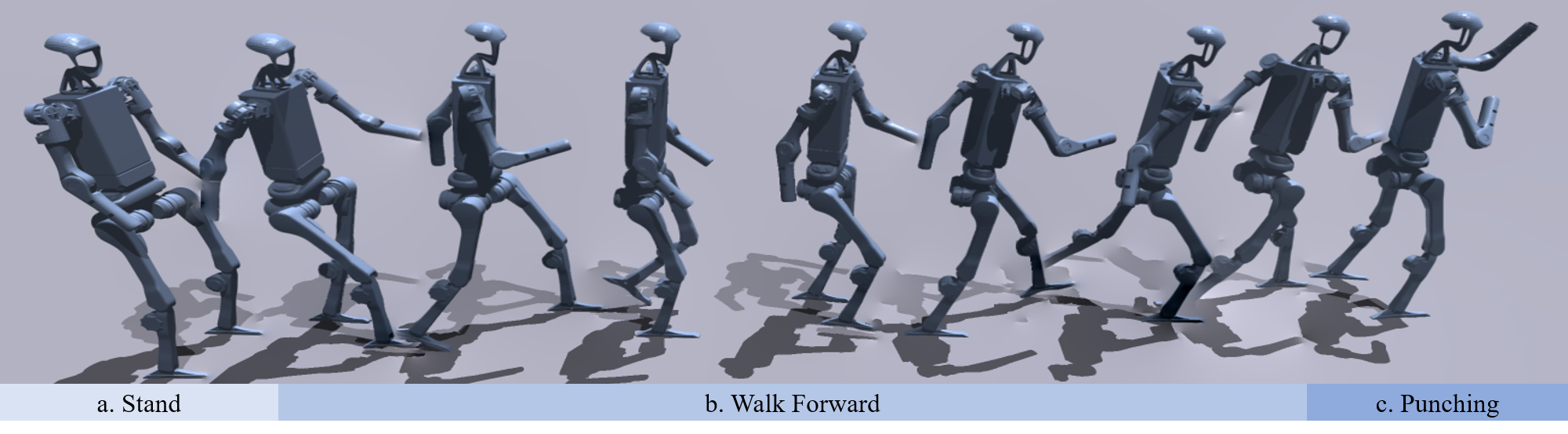

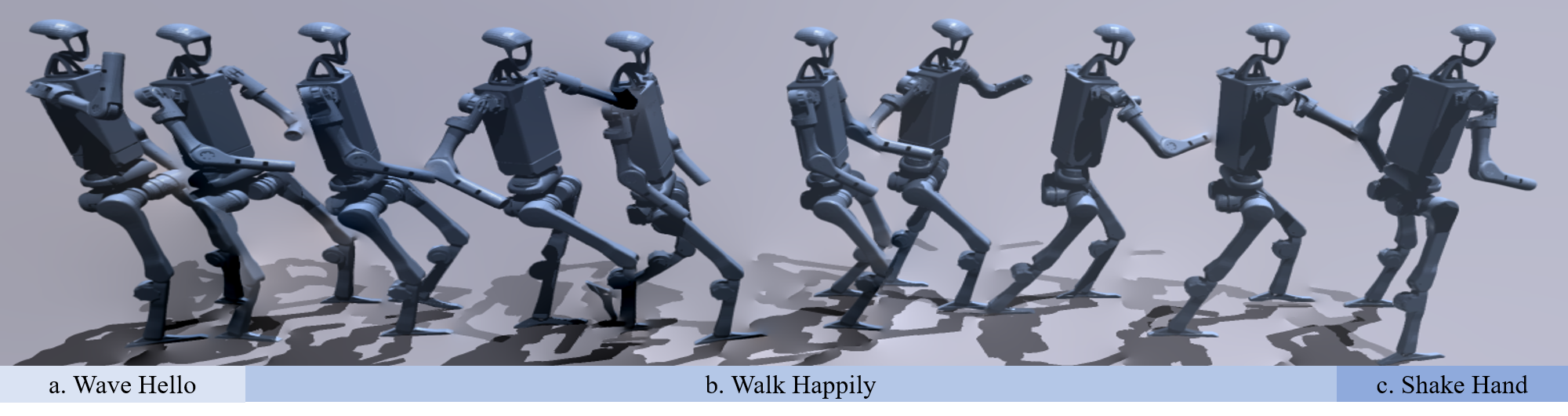

Visualization of Different Tasks

H-Locomotion

H-Multiwalk

Q-Locomotion

H-Interaction

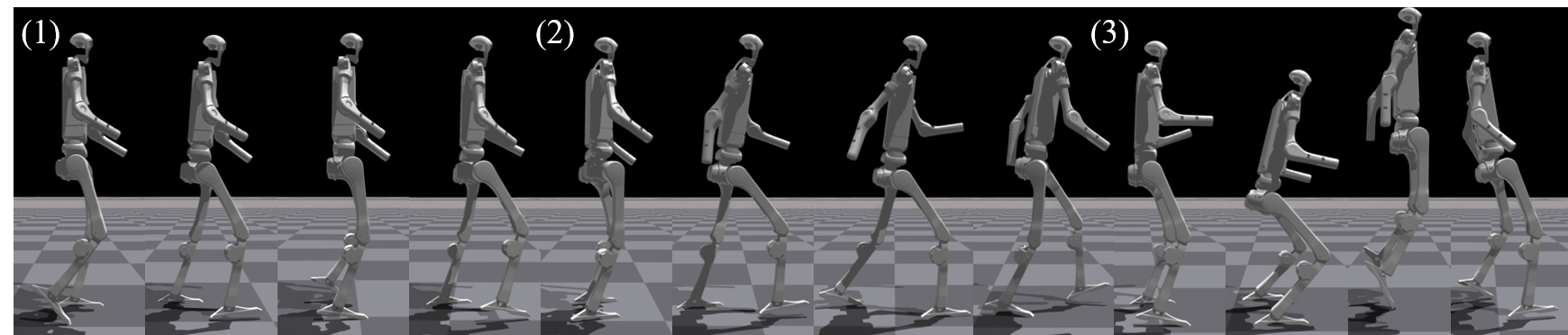

Examples of Motion Transition

Deployment

Wave Hand

Shake Hand

Punch

Stable Stand to Punch